How to get your legal team to approve using AI in recruiting

3 risks that spook legal teams and how to address them

Like all teams, recruiting teams are excited about using AI, but Legal and Compliance departments at larger companies are saying:

Hold on. Not so fast.

While it’s tempting to see Legal as the “Department of No,” their hesitation usually stems from a valid concern:

To gain $1 in efficiency, we can’t risk losing $10 plus our reputation.

To get legal teams to say yes, you need to understand the core issues that make them nervous.

3 risks that make legal teams uncomfortable about AI in recruiting

1️⃣ AI that works like a black box

If AI tools:

scrape external data

analyze social profiles

infer personality or behavioral traits

build hidden candidate scores

Legal teams see a huge unknown risk.

Why? Because if a candidate challenges a hiring decision, the company must be able to explain what signals influenced that decision.

Recent lawsuits involving AI hiring platforms — including cases against companies like Eightfold and Workday — have raised exactly this question: Are AI systems creating undisclosed profiles or scores about candidates that employers themselves cannot fully explain?

For legal teams, this becomes a major exposure.

How to avoid this risk

AI should analyze only what candidates intentionally provide — resumes, assessments, or application responses.

If a candidate asks “What data was used and how?” the recruiting team should be able to provide an accurate answer, not a mysterious algorithmic score.
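One way to make that answer easy to produce is to log every signal the AI uses, along with where it came from. The snippet below is a minimal sketch of that idea, not a description of any vendor's product; `ALLOWED_SOURCES`, `CandidateAudit`, and the source names are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist: only data the candidate intentionally submitted.
ALLOWED_SOURCES = {"resume", "assessment", "application_response"}


@dataclass
class Signal:
    source: str       # where the data came from
    description: str  # plain-language note on how it was used


@dataclass
class CandidateAudit:
    candidate_id: str
    signals: list[Signal] = field(default_factory=list)

    def add_signal(self, source: str, description: str) -> None:
        # Refuse anything the candidate did not provide themselves,
        # such as scraped data or social profiles.
        if source not in ALLOWED_SOURCES:
            raise ValueError(f"source not allowed: {source}")
        self.signals.append(Signal(source, description))

    def explain(self) -> list[str]:
        # A readable answer to "What data was used and how?"
        return [f"{s.source}: {s.description}" for s in self.signals]
```

With a record like this, a recruiter can call `explain()` and hand over a plain list of inputs instead of a score nobody can unpack.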

2️⃣ AI that rejects candidates on its own

If AI tools:

automatically filter out candidates

reject applicants before a recruiter sees them

make hiring decisions based purely on algorithmic scoring

Legal teams see a major accountability risk.

Why? Because when a candidate challenges a rejection, the company must be able to show that a human reviewed the decision and applied fair judgment.

Courts and regulators are increasingly questioning hiring systems that fully automate screening decisions without meaningful human oversight.

How to avoid this risk

AI should help humans make better decisions, not make those decisions on their behalf.

Use AI to highlight strong matches, summarize candidate information, and accelerate search — but ensure a recruiter makes the final decision to reject or move forward.
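As a rough illustration of that split, the sketch below lets the AI score order the review queue while only a named recruiter can record an outcome. It assumes a hypothetical `Candidate` record and helper names; a real applicant tracking system will look different.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    ai_match_score: float          # advisory only, never a verdict
    decision: Optional[str] = None
    decided_by: Optional[str] = None


def rank_for_review(candidates: list[Candidate]) -> list[Candidate]:
    # The AI only orders the queue. Nobody is filtered out.
    return sorted(candidates, key=lambda c: c.ai_match_score, reverse=True)


def record_decision(candidate: Candidate, decision: str, recruiter: str) -> Candidate:
    if decision not in {"advance", "reject"}:
        raise ValueError(f"unknown decision: {decision}")
    # Every outcome must be attributed to a human reviewer.
    if not recruiter:
        raise PermissionError("a named recruiter must make the decision")
    candidate.decision = decision
    candidate.decided_by = recruiter
    return candidate
```

The point of the design is that there is no code path where a low score alone turns into a rejection.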

3️⃣ No way to opt out of AI features

There will always be features that a specific company may find risky to use.

For example:

Searching on AI-derived attributes like “job stability” or “academic brilliance”

Evaluating profiles on softer skills like “leadership experience”

While startups may love these features, an enterprise may want to opt out of them.

Why? Because if a tool behaves unexpectedly — for example introducing bias or prioritizing the wrong signals — the organization may be legally exposed.

How to avoid this risk

Look for AI products that allow opting out of certain features your legal team is not comfortable with.

Teams should be able to see which signals the AI prioritizes, review candidates beyond AI rankings, and adjust the system to align with their hiring policies and goals.
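In practice, opting out often comes down to a per-organization feature configuration. The following is a minimal sketch under that assumption; the feature names and the `effective_features` helper are made up for illustration and do not describe any particular product.

```python
# Hypothetical feature catalog with vendor defaults.
DEFAULT_FEATURES = {
    "resume_summary": True,
    "derived_attribute_search": True,  # e.g. "job stability"
    "soft_skill_evaluation": True,     # e.g. "leadership experience"
}


def effective_features(opt_outs: set[str]) -> dict[str, bool]:
    # Fail loudly on typos so an opt-out is never silently ignored.
    unknown = opt_outs - DEFAULT_FEATURES.keys()
    if unknown:
        raise KeyError(f"unknown features: {sorted(unknown)}")
    return {
        name: enabled and name not in opt_outs
        for name, enabled in DEFAULT_FEATURES.items()
    }


# An enterprise whose legal team is uncomfortable with inferred traits:
enterprise = effective_features({"derived_attribute_search", "soft_skill_evaluation"})
```

Here `enterprise` keeps resume summaries switched on and turns both inferred-trait features off, while a startup that passes an empty set keeps everything.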

The organization should always own the process — not the algorithm.

AI vendors need to play an active role here

AI systems in recruiting don’t just have a responsibility toward their customers. They also have a bigger one: toward the millions of people in the labor market.

A job supports people’s livelihoods and their families, and often defines important parts of their lives and identities. If AI systems make or influence decisions in ways that are opaque or poorly designed, they can severely affect those lives.

That’s why these guardrails should not be treated as compliance hurdles. They should be core product design principles.

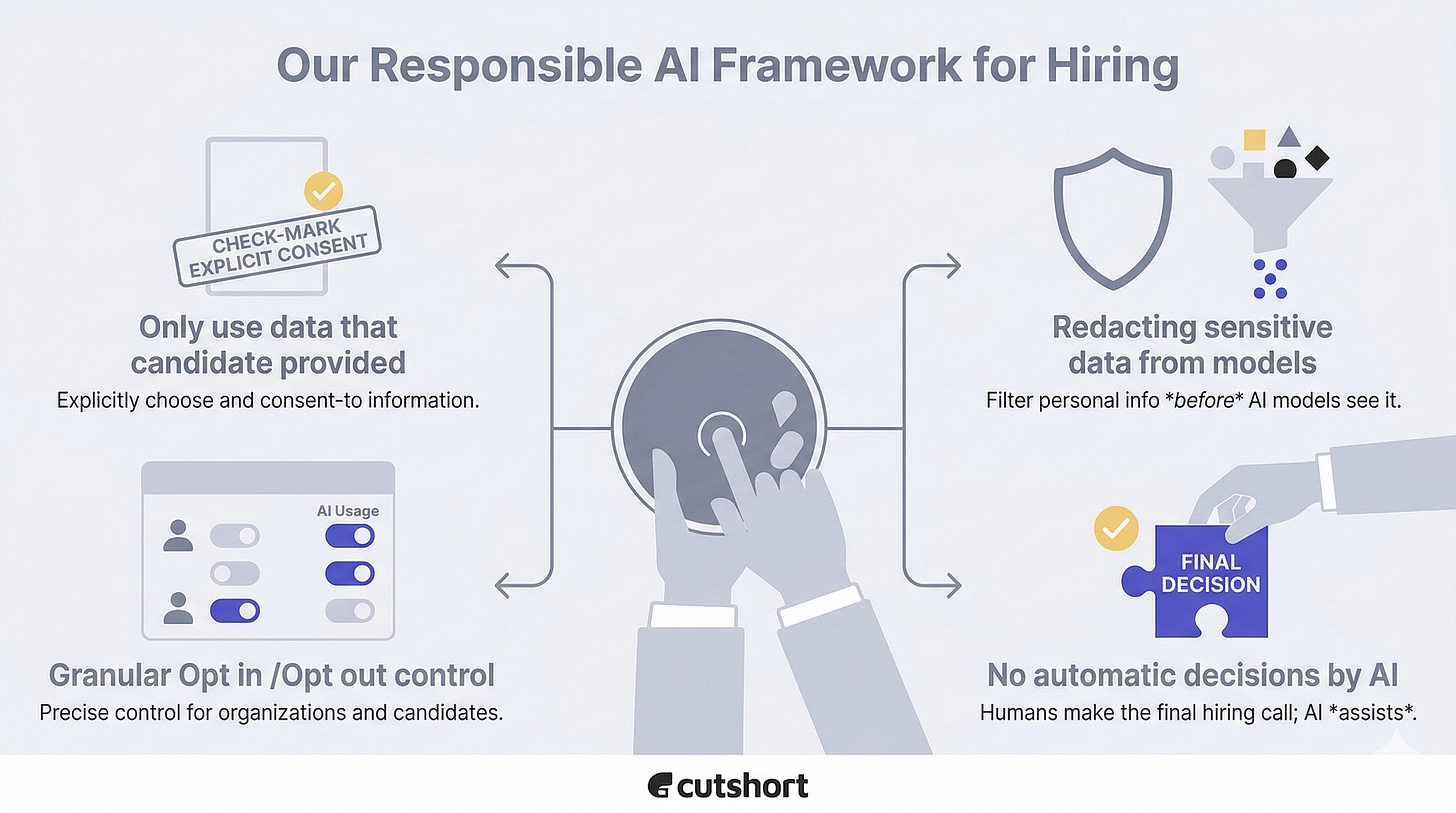

At Cutshort, we did exactly this and have documented our approach to Responsible AI in hiring here:

👉 https://cutshort.ai/responsible-ai

It’s not perfect and we are always improving it, but we know how much this matters and are committed to bringing AI to our users in a way that is fair and safe for everyone.

How is your team balancing AI speed with human oversight? I’d love to hear your thoughts in the comments.